02.24.08

Posted in robobait at 11:28 am by ducky

I always forget how to do this, and it is incredibly handy…

I frequently want to munge a bunch of files and afterwards change their extensions. For example, I want to do something like

cat a.xml | ./xml2html > a.html

cat b.xml | ./xml2html > b.html

(etc)

It is really easy to just tack something onto the end in bash:

for x in *.xml; do echo "$x"; cat "$x" | ./xml2html > "$x.html"; done

but that will give me ugly files like a.xml.html, b.xml.html, etc.

The basename command to the rescue!

for x in *.xml; do echo "$x"; cat "$x" | ./xml2html > "$(basename "$x" .xml).html"; done

(Note 1: I always like to run the loop first with echo $x as its only command, to make sure I’m only getting the files I mean to.)
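A slightly more defensive version of the same loop, as a minimal sketch: it quotes "$x" so file names with spaces survive, uses the POSIX parameter expansion ${x%.xml} (which strips a trailing .xml) instead of basename, reads the file with < instead of cat, and skips the unexpanded *.xml pattern when no files match. The function name convert_all is hypothetical; it takes the converter as an argument so it can be tried with cat before pointing it at ./xml2html.

```shell
#!/bin/sh
# Sketch: run a converter over every *.xml file in the current directory,
# writing NAME.html next to NAME.xml (a.xml -> a.html, not a.xml.html).
# convert_all is a hypothetical helper name; $1 is the converter command,
# which is assumed to read XML on stdin and write HTML on stdout.
convert_all() {
  conv=$1
  for x in *.xml; do
    # If nothing matches, the shell leaves the literal "*.xml"; skip it.
    [ -e "$x" ] || continue
    echo "$x"
    # ${x%.xml} removes the trailing ".xml" without spawning basename.
    "$conv" < "$x" > "${x%.xml}.html"
  done
}
```

For the post’s example this would be called as convert_all ./xml2html. Unlike basename, ${x%.xml} leaves any leading directory in place, which only matters if the glob includes a path such as data/*.xml.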

(Note 2: Yes, yes, xargs is a possibility, but I don’t know off the top of my head how to keep the pipe inside the command executed by xargs, rather than having the pipe get the output of xargs. Maybe someday I’ll figure out how to do that and post a robobait on that as well.)
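One common way to keep the pipe inside the command that xargs runs is to hand each file name to a fresh sh -c, with the whole pipeline in single quotes so the outer shell never sees the | or the >. This is a minimal sketch, assuming GNU find and xargs (-maxdepth, -print0, -0, and -r, which stops GNU xargs from running the command once with no file name when nothing matches); the function name xargs_convert is hypothetical.

```shell
#!/bin/sh
# Sketch: the same conversion driven by find + xargs.
# Assumes GNU find/xargs (-maxdepth, -print0, -0, -r).
# xargs_convert is a hypothetical helper name; $1 is the converter command.
xargs_convert() {
  # -print0 / -0 keep names with spaces intact; -n 1 runs one sh per file.
  # The single quotes keep | and > inside the child sh, so the pipe
  # feeds the converter instead of capturing xargs's own output.
  find . -maxdepth 1 -name '*.xml' -print0 |
    xargs -0 -r -n 1 sh -c 'cat "$2" | "$1" > "${2%.xml}.html"' sh "$1"
}
```

For each file, xargs runs sh -c 'SCRIPT' sh CONVERTER FILE, so inside the script $1 is the converter and $2 is the file name; the lone sh fills $0. For the post’s example this would be xargs_convert ./xml2html.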

Permalink

02.21.08

Posted in Hacking, Random thoughts at 9:05 pm by ducky

Paul Ramsey posted recently that open source was funded by four basic types:

- The altruist / tinkerer

- The service provider

- The systems integrator

- The company man

He continued by saying, “Notably missing from this list is ‘the billionaire’ and ‘the venture capitalist’.”

I have to quibble a little bit.

Mitch Kapor personally financed (most of) the Open Source Applications Foundation, which pumped quite a bit of money into open source projects.

Obviously a lot of it went into the Chandler project, but some went into various framework projects in order to make them work well enough to use. For example, OSAF supported someone full-time for several years to improve the Mac version of wxWidgets.

There is also a fair amount of work done by people who made a ton of money and are now tinkering. I know personally a few people who made boodles on IPOs, retired, and now spend their time on open-source projects. While you could say that these are in Paul’s “tinkerer” class, these people can invest a lot more energy than someone on nights and weekends is likely to.

I will acknowledge, however, that the fraction of open source work financed by rich folks is probably small.

However, I think Paul is missing some significant sources of open-source financing. Paul’s definition of “company man” talks of people who have a little bit of discretionary time at work. Maybe it’s different outside of Silicon Valley, but I don’t know many people who have much discretionary time at work. On the other hand, a fair number of people contribute to open source as a natural part of doing their jobs.

- Sometimes they use an open-source project in their work, find that it has some bugs that they need to fix to make their project work, and contribute the fixes back. This is self-interest: they would rather not port their fixes into each new rev of the open-source project. My husband said that his former company contributed some fixes for Tk for this reason.

- There are a few companies who use a technology so much that they feel it worthwhile to support work on that technology. Google pays Guido von Rossum’s salary, for example.

- I don’t know what category to put Mozilla into, but it makes money from partnerships (e.g. with Google) to finance its development.

- There is a non-zero amount of money that goes into open source, directly or indirectly, from governments and other non-profit funding agencies. For example, the Common Solutions Group gave US$1.25M to OSAF; the Mellon Foundation gave US$1.6M. This made perfect sense: it is far cheaper for university consortia to give OSAF a few million dollars to develop an open-source calendar than it is to give Microsoft tens of millions every year for Exchange.

- While I don’t have hard data, I bet that a fair amount of open-source work gets done at universities. All of my CS grad student colleagues work with open source because it’s cheap and easy to publish with. While they might not release entire projects, I bet I’m not the only one who has fed bug fixes back to the project.

Permalink

02.20.08

Posted in Art, Random thoughts at 10:05 pm by ducky

BTW, one of the things that I love about living at Green College is that there is always someone around who knows the answer. Over the weekend, I got fixated on a big empty wall at our host’s place, and decided that it needed a huge forgery of a medieval map. But it needed to be BIG to fit the wall.

Medieval maps tend to be about the size of a sheepskin because, well, vellum was made from sheepskins. They just didn’t have six foot sheep.

I started wondering what kind of story you could make up about how it was so big. Elephant parchment? And this got me to wondering how big a piece of leather you could get from an African elephant… so this morning I asked Jake at breakfast. Jake tracks elephants in Kenya, of course. (Don’t you routinely have breakfast with elephant trackers?)

Based on his estimates, I could get a rectangular piece around six feet by twelve feet.

Jake also told me that they make paper out of elephant dung. Elephants, not being ruminants, pass fiber through undigested and in great form for making paper. Even better for my medieval map forgery!

Update: While it isn’t hugely common, leather is made even today of sealskin and walrus skin, so presumably you could make parchment out of it as well. Walruses are about 3m long; the biggest elephant seal on record was almost 7m long.

I found some evidence that Canadians successfully made leather out of the skin of white (beluga) whales. Belugas measure up to 5m long. If the skin of blue whales (up to 32m long) is similar to that of belugas, then the maximum size of a piece of parchment is probably around 30m. That’s one big piece of parchment!

Permalink

02.11.08

Posted in programmer productivity at 11:46 pm by ducky

In this post, I introduced the idea of writing down three hypotheses for what a bug might be. In this recent post, I theorized that writing them down might reduce confirmation bias. This was all just conjecture, however.

I just discovered some confirmation in Klahr, D. and K. Dunbar (1988). Dual space search during scientific reasoning. Cognitive Science 12, 1-55. They did one experiment where they gave people a programmable toy and asked them to figure out the semantics of one of the programming keys. They then did a second experiment where they asked a new set of subjects to come up with as many hypotheses as possible for what the key did, and then gave them the toy and asked them to figure out the semantics of that key. The subjects were much faster at figuring it out after verbalizing a bunch of hypotheses than without verbalizing: the first set took more than three times as long as the second set to figure it out.

Now, I don’t think this paper says how long people spent coming up with hypotheses (all the PDFs I found of this paper were missing pages), but it would be odd if that time were more than the time saved and the authors didn’t mention it.

Permalink

02.08.08

Posted in programmer productivity, robobait at 12:34 pm by ducky

Frequently, I use the robobait tag to say, “this is something that I know right now that I want to be able to find again in the future”. This robobait tag, by contrast, means, “This is something that I think more people should know about. Yo, search engines! Give this page a higher ranking!”

Evan Robinson wrote an article about output as a function of hours per week that draws upon historical research. It says that working a lot is a bad idea.

Permalink

02.07.08

Posted in Politics, Random thoughts at 11:38 pm by ducky

Mitt Romney dropped out of the US presidential race today. That pretty much guarantees that the next president will be one of John McCain, Hillary Clinton, or Barack Obama.

That, in turn, means that my country’s leadership will almost certainly cease accepting torture on 20 January 2009!

That realization made me very, very happy.

Now we just have to get my fellow citizens to cease accepting torture…

Permalink

02.06.08

Posted in programmer productivity at 9:27 pm by ducky

This is a somewhat expanded version of the talk I’m giving to the Vancouver Software Developers’ Network tomorrow. I don’t think there is much that I haven’t said in previous blog postings, but I wanted to gather it all in one place.

“What is the variability between programmers?” is a question I was curious about when I started my MS CS at UBC. In Silicon Valley, I’d heard a rule of thumb that there was a 100-to-1 difference in programmer productivity. My husband had heard ten-to-one; Joel on Software quotes one of his old profs to suggest that it is ten-to-one or larger.

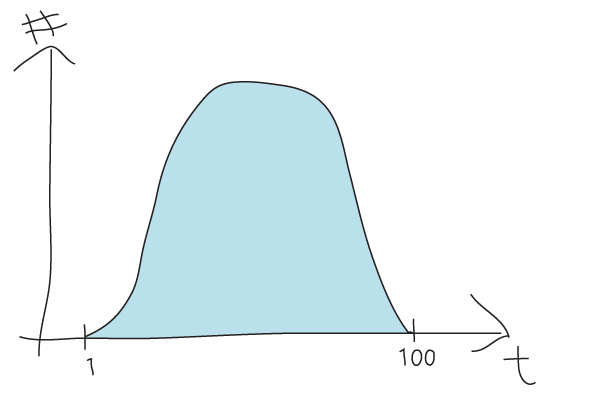

Here is a drawing (deliberately crude, so nobody would think it represents actual data) of what I thought the histogram would look like: the number of programmers who finish a task on the y-axis, and the time it takes them on the x-axis.

The first problem here is that this just measures time, not quality. It is presumably faster to write lousy code than to write code that is clean, easy to read, easy to maintain, etc. That is a legitimate issue, but unfortunately my reading of peer-reviewed papers has not convinced me that anybody really knows how to measure quality.

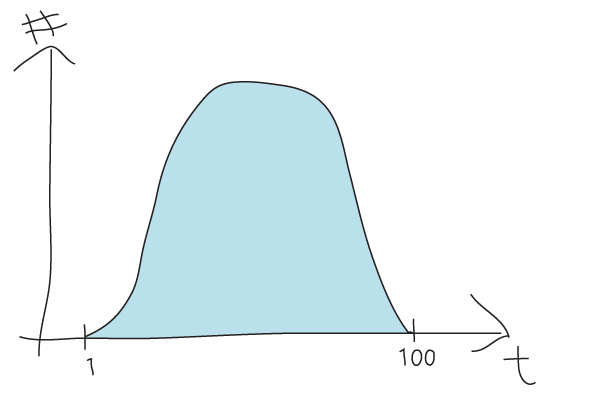

There are some people who have measured coding speed, however. I have reported previously on experiments by DeMarco and Lister, Dickey, Sackman, Curtis, and Ko which measure the time it takes a number of programmers to do a task. What I found is that the time histogram curve actually looks like this:

Observations:

- The worst programmer isn’t 100 or 10 times slower than the best; the worst programmer, found at the end of a very long tail, is infinitely worse. If you think about it, I am going to be infinitely faster than a walrus. It is hard to program with those flippers. (I actually worked on a project with a contractor who, after a year, was let go because despite repeated requests, he had not checked in a single line of his code. I think that counts as infinitely slow.)

- The median programmer is about two to four times slower than the fastest on single tasks. (Because of regression to the mean, this advantage should get smaller with many tasks.)

- The curve is wickedly shifted to the left. This makes sense: there isn’t much you can do to get faster, but there are a LOT of things you can do to get slower. (Never checking in your code, for example.)

What implications does this curve have?

- Don’t spend a lot of effort on hiring the absolute best; spend lots of effort on avoiding hiring losers.

- Don’t spend a lot of effort on learning how to type faster; spend lots of effort on figuring out how to avoid getting stuck.

“Don’t get stuck” is easier said than done, of course, but there are things you can do.

- When you have a question — e.g. “Why is Foo set to 3 instead of 5?” — write down three hypotheses for what the answer might be. This can help you avoid confirmation bias. I came up with this idea after reading that breadth-first-ish approaches to problems are more successful than more depth-first-ish searches. I don’t have any research on it, but writing three hypotheses helps me a lot.

- Explain your question/problem to someone. It doesn’t even need to be someone who knows anything about coding. While there is little academic research on verbalizing, there are lots of anecdotes on it being helpful to verbalize. Anecdotes say that “rubber ducking” is useful, and that has been my experience as well. Verbalizing might also be part of why I find writing down three hypotheses so useful.

- Ask for help! Someone familiar with the particular area that you are investigating might be harder to find than a rubber duck, but sometimes can be more useful.

- Note to managers: your new hires will probably feel uncomfortable interrupting you to ask for help. Instead of making them come interrupt you, go to them. Once or twice per day, stop by their office and spend some time with them. Ask to see what they are doing; pair program with them for a little while. When they are new, you are more likely to catch them being stuck than not being stuck, so you can proactively un-stick them. Even if they are not stuck, you can still probably give good pointers on tools and techniques.

- Use tools to help you find the answers to your questions! There are all kinds of great tools available now that can help you answer questions.

- Omniscient debuggers: Debuggers like odb and undoDB keep track of every variable’s state change and then let you trace backwards to where that variable changed state. (Note: Cisco also made an Eclipse plugin for omniscient debugging in C++, but for internal use only.)

- Many code coverage tools will also color lines based on whether they were executed or not. This is a cheap way to see which execution paths were taken! Examples include Visual Studio, the Intel C++ Code Coverage Tool, and the Eclipse plug-in EclEmma.

- One of the questions that I frequently ask is, “How do I get information from class Foo over to class Bar?” Prospector and Strathcona can help with that. Strathcona looks for examples of existing code in your code base that gets you from Foo to Bar; Prospector looks for existing code, and also traverses the tree of classes that can be reached from a given class to answer that question.

- Use tools to keep you from having to get stuck in the first place.

- Findbugs looks for code that “looks funny” and which is likely to have errors in it.

- JML allows the user to specify all kinds of “contracts” about how a method will work — preconditions, post-conditions, invariants, etc — in a very rich way. If anybody breaks those contracts (e.g. by passing illegal arguments), it gets flagged. It sounds like it would be really tedious to generate all those promises, but the tool Daikon can help. Daikon can generate promises based on actual run data; if something changes to violate the promises, it will flag it. (The contracts also work as extra documentation.)

Permalink

02.01.08

Posted in programmer productivity at 11:31 am by ducky

Whoa dude!

I was looking for how to set a breakpoint on a field in Eclipse, i.e. any time that field gets set, break into the debugger. I stumbled into something else.

In the Variables pane of the debugger, if you right-click on a variable, one of the (many) options is All Instances; another is All References. They appear to show a list of all instances of a particular class and all the objects that refer to that instance. Oh wow! That would have been so useful!

And for my record, the way to set a breakpoint on field modifications is to

- find the field declaration,

- set a breakpoint on that line,

- go to the Breakpoints pane,

- right-click on that breakpoint,

- select Breakpoint Properties, and

- uncheck the Field Access box

Permalink