08.15.09

Progress! Including census tracts!

It might not look like I have done much with my maps in a while, but I have been doing quite a lot behind the scenes.

Census Tracts

I am thrilled to say that I now have demographic data at the census tract level on my electoral map! Unlike my old demographic maps (e.g. my old racial demographics map), the code is fast enough that I don’t have to cache the overlay images. This means that I can allow people to zoom all the way out if they choose, while before I only let people zoom back to zoom level 5 (so you could only see about 1/4 of the continental US at once).

These speed improvements were not easy, and it’s still not super-fast, but it is acceptable. It takes between 5 and 30 seconds to show a thematic map for 65,323 census tracts. (If you think that is slow, go to Geocommons, the only other site I’ve found that serves similarly complex maps on-the-fly. They take about 40 seconds to show a thematic map for the 3,143 counties.)

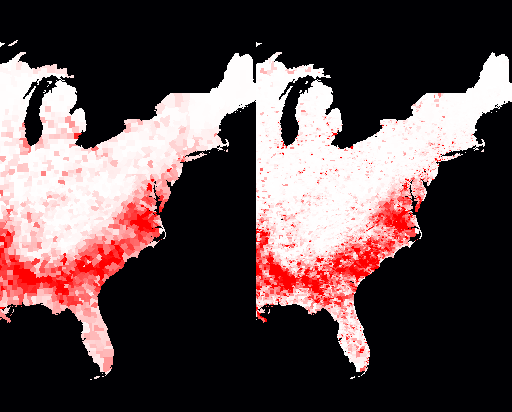

A number of people have suggested that I could make things faster by aggregating the data — show things per-state when way zoomed out, then switch to per-county when closer in, then per-census tract when zoomed in even more. I think that sacrifices too much. Take, for example, these two slices of a demographic map of the percent of the population that is black. The %black by county is on the left, the %black by census tract is on the right. The redder an area is, the higher the percentage of black people is.

You’ll notice that the map on the right makes it much clearer just how segregated black communities are outside of the “black belt” in the South. It’s not just that black folks are a significant percentage of the population in only a few Northern counties; they are significantly present only in tiny little parts of those counties. That’s visible even at zoom level 4 (which is the zoom level that my electoral map opens on). Aggregating the data to the state level would be even more misleading.

Flexibility

Something else that you wouldn’t notice is that my site is now more buzzword-compliant! When I started, I hard-coded the information layers that I wanted: what the name of the attribute was in the database (e.g. whitePop), what the English-language description was (e.g. “% White”), what colour mapping to use, and what min/max numeric values to use. I now have all that information in an XML file on the server, and my client code calls to the server to get the information for the various layers with AJAX techniques. It is thus really easy for me to insert a new layer into a map or even to create a new map with different layers on it. (For example, I have dithered about making a map that shows only the unemployment rate by county, for each of the past twelve months.)
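To give a feel for what a layer definition like that involves, here is a minimal Python sketch that reads layer descriptions from XML. It is not my actual file format or server code: the element name `layer`, the attribute names (`attribute`, `label`, `colours`, `min`, `max`), the colour-ramp names, and the function `load_layers` are all invented for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical layer file. The element and attribute names are made up
# for this sketch; they are not the real schema.
LAYERS_XML = """
<layers>
  <layer attribute="whitePop" label="% White" colours="white-to-blue" min="0" max="100"/>
  <layer attribute="unemployment" label="Unemployment rate" colours="white-to-red" min="0" max="25"/>
</layers>
"""

def load_layers(xml_text):
    """Parse layer definitions into a list of plain dictionaries."""
    root = ET.fromstring(xml_text.strip())
    layers = []
    for node in root.findall("layer"):
        layers.append({
            "attribute": node.get("attribute"),  # column name in the database
            "label": node.get("label"),          # human-readable description
            "colours": node.get("colours"),      # name of the colour mapping
            "min": float(node.get("min")),       # value mapped to the low end
            "max": float(node.get("max")),       # value mapped to the high end
        })
    return layers

layers = load_layers(LAYERS_XML)
```

With something along these lines, adding a layer means adding one more `<layer>` element rather than touching the client code.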

Some time ago, I also added the ability for me to specify how to calculate a new number from two different attributes. Before, if I wanted to plot something like %white, I had to add a column to the database of (white population / total population) and map that. Instead, I added the ability to do divisions on-the-fly. Subtracting two attributes was also obviously useful for things like the difference in unemployment from year to year. While I don’t ever add two attributes together yet, I can see that I might want to, for example to show the percentage of people who are either Evangelical or Mormon. (If you come up with an idea for how multiplying two attributes might be useful, please let me know.)
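The idea of combining two attributes on-the-fly can be sketched in a few lines of Python. This is only an illustration under my own assumptions, not the code running on the site: the operation names, the function `derive`, and the sample attribute names `unemp2009` and `unemp2008` are hypothetical.

```python
import operator

# Hypothetical operation names mapped to the arithmetic they perform.
OPERATIONS = {
    "divide": operator.truediv,
    "subtract": operator.sub,
    "add": operator.add,
}

def derive(row, op, first, second):
    """Combine two attributes of one jurisdiction; None means 'no data'."""
    a, b = row.get(first), row.get(second)
    if a is None or b is None:
        return None
    if op == "divide" and b == 0:
        return None  # e.g. a tract with zero population
    return OPERATIONS[op](a, b)

# One made-up census tract.
tract = {"whitePop": 1200, "totalPop": 4000, "unemp2009": 9.5, "unemp2008": 6.0}

share_white = derive(tract, "divide", "whitePop", "totalPop")
unemp_change = derive(tract, "subtract", "unemp2009", "unemp2008")
```

Returning `None` for missing data or a zero denominator lets the map leave that area uncoloured instead of failing.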

Loading Data

Something else that isn’t obvious is that I have developed some tools to make it much easier for me to load attribute data. I now use a data definition file to spell out the mapping between fields in an input data file and where the data should go in the database. This makes it much faster for me to add data.
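As a rough sketch of what a data definition does, the Python below maps columns of an input CSV onto database column names and types. The definition format, the input column names (`GEO_ID`, `TOTAL`, `WHITE`), the database column `tract_id`, and the function `map_rows` are assumptions made for this example; my real definition files look different.

```python
import csv
import io

# Hypothetical data definition: input column -> (database column, converter).
DEFINITION = {
    "GEO_ID": ("tract_id", str),
    "TOTAL": ("totalPop", int),
    "WHITE": ("whitePop", int),
}

def map_rows(csv_text, definition):
    """Yield one database-ready dictionary per input line.

    Input columns that the definition does not mention are ignored.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    for record in reader:
        yield {
            db_col: convert(record[src])
            for src, (db_col, convert) in definition.items()
        }

# A made-up input file with one extra column that should be dropped.
SAMPLE = "GEO_ID,TOTAL,WHITE,IGNORED\n06001400100,4000,1200,x\n"
rows = list(map_rows(SAMPLE, DEFINITION))
```

Keeping the mapping in data rather than in code means a new input file usually needs only a new definition, not a new loader.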

The process still isn’t completely turnkey, alas, because there are a million-six different oddnesses in the data. Here are some of the issues I’ve faced that make loading the data less than straightforward:

- Sometimes the data is ambiguous. For example, a number of states have two jurisdictions with the same name: the census records Bedford City, VA and Bedford County, VA as separate regions. Both are frequently just named “Bedford” in databases, so I have to go through by hand, figure out which Bedford it is, and assign the right code to it. (And sometimes when the code is assigned, it is wrong.)

- Electoral results are reported by county everywhere except Alaska, where they are reported by state House district. That meant that I had to copy the county shapes to a US federal electoral districts database, then delete all the Alaskan polygons, load up the state House district polygons, and copy those to the US federal electoral districts database.

- I spent some time trying to reverse-engineer the (undocumented) Census Bureau site so that I could automate downloading Census Bureau data. No luck so far. (If you can help, please let me know!) This means that I have to go through an annoyingly manual process to download census tract attributes.

- Federal congressional districts have names like “CA-32” and “IL-7”, and the databases reflect that. I thought I’d just use the state jurisdiction ID (the FIPS code, for mapping geeks) for two digits and two digits for the district ID, so CA-32 would turn into 0632 and IL-7 would turn into 1707. Unfortunately, if a state has a small enough population, it only gets one congressional rep; the data file had entries like “AK-At large”, which not only messed up my parsing, but raised the question of whether at-large congresspeople should be district 0 or district 1. I scratched my head and decided to assign 0 to at-large districts. (So AK-At large became 0200.) Well, I found out later that data files seem to assign at-large districts the number 1, so I had to redo it.
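The congressional district numbering from the last item can be sketched like this in Python. The function name `district_code` and the constant names are mine, the FIPS table is only a three-state subset, and the at-large number follows what I saw in the data files I used (1), which other data sets may not share.

```python
# State FIPS codes for the states used in the examples (subset only).
STATE_FIPS = {"AK": "02", "CA": "06", "IL": "17"}

# Assumption taken from the data files described above: at-large
# districts are numbered 1. Change this if your data uses 0.
AT_LARGE_NUMBER = 1

def district_code(name):
    """Turn a name like 'CA-32' or 'AK-At large' into a four-digit code."""
    state, _, district = name.partition("-")
    if district.strip().lower() == "at large":
        number = AT_LARGE_NUMBER
    else:
        number = int(district)
    return f"{STATE_FIPS[state.strip().upper()]}{number:02d}"

codes = [district_code(n) for n in ("CA-32", "IL-7", "AK-At large")]
```

Keeping the at-large number in one constant would have made my redo a one-line change.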

None of these data issues are hard problems; they are just annoying, and they mean that I have to do some hand-tweaking of the process for almost every new jurisdiction type or attribute. It also takes time just to load the data up to my database server.

I am really excited to get the on-the-fly census tract maps working. I’ve been wanting it for about three years, and working on it off and on (mostly off) for about six months. It really closes a chapter for me.

Now there is one more quickie mapping application that I want to do, and then I plan to dive into adding Canadian information. If you know of good Canadian data that I can use freely, please let me know. (And yes, I already know about GeoGratis.)

Tamfang said,

December 2, 2010 at 12:53 pm

The visual message might be even better if the map conveyed density as well as ratio, by showing Black pop.density in red and nonBlack pop.density in green against a black background. This is such an obvious idea that I’ve only ever seen it used once, several years ago, in a map of households with and without DSL access (or some such).